In the rapidly evolving landscape of artificial intelligence and automation, Kimi K2.5 has emerged as a noteworthy contender, both for its capabilities and for its strategic positioning against established players like OpenAI and Anthropic. Recent evaluations have yielded several insights into Kimi K2.5’s performance, covering agentic tasks, multimodal support, cost efficiency, token usage, and model architecture. These insights can help leaders and automation specialists sift through the noise of AI offerings and make informed decisions about tool selection and deployment.

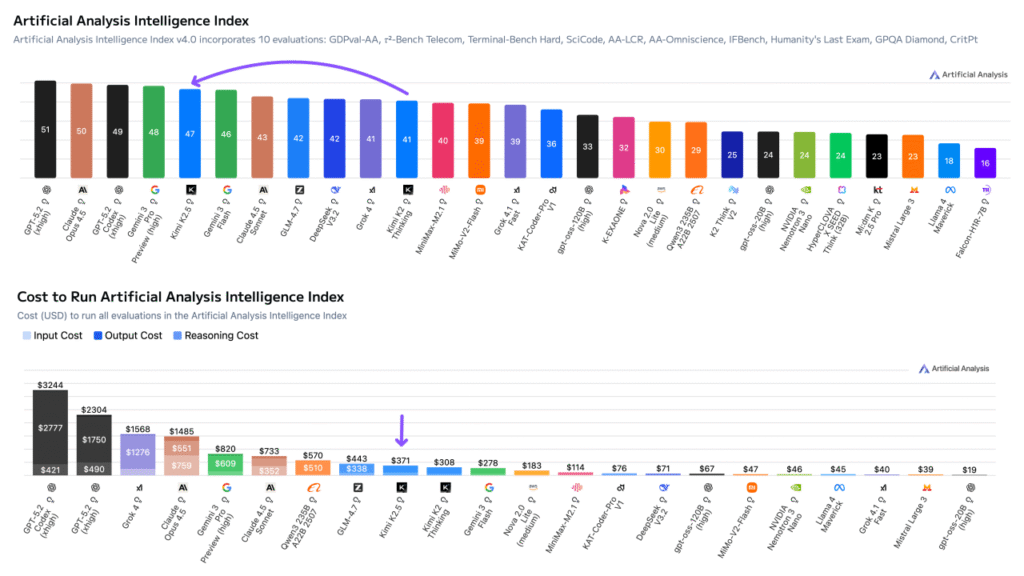

One of the most critical metrics for evaluating AI models is their performance on agentic tasks, which encompass a range of realistic knowledge work. Kimi K2.5 has achieved an Elo score of 1309 on the GDPval-AA evaluation, putting it behind only the models from OpenAI and Anthropic, yet ahead of other models like GLM-4.7 and DeepSeek V3.2. This level of performance indicates strong capabilities in essential tasks such as data analysis and presentation preparation. The agentic harness, Stirrup, offers a unique environment that allows models to operate with shell access and web browsing capabilities, thereby enhancing their effectiveness in real-world applications.

Another cornerstone of Kimi K2.5’s offering is its native support for multimodal inputs, a considerable leap over its predecessors and other open-weight models. For the first time, image and video inputs are accepted alongside text, removing a limitation that had been a significant barrier to wider adoption of open-weight models relative to proprietary systems. Kimi K2.5 scores 75% on the MMMU Pro visual reasoning benchmark, which makes it competitive among open-weight offerings. It lags slightly behind models like Gemini 3 Pro but is comparable to GPT-5.2 and Claude Opus 4.5, making it a versatile choice for tasks that combine text with visual inputs.

Cost considerations also play a vital role in platform selection. Running the Artificial Analysis Intelligence Index with Kimi K2.5 costs a moderate $371. That is more than four times cheaper than Claude Opus 4.5 and GPT-5.2, though still more expensive than alternatives like DeepSeek V3.2. For businesses, particularly small to medium-sized enterprises (SMEs) aiming to leverage AI, these figures are essential in assessing total cost of ownership and return on investment (ROI). Given the advanced capabilities Kimi K2.5 offers at a lower price point than its higher-tier competitors, it could become the go-to option for enterprises seeking both performance and cost efficiency.

Kimi K2.5’s token usage is relatively moderate: it required approximately 82 million reasoning tokens to complete the evaluations. This is in line with expectations for models in similar intelligence tiers and is notably lower than other options such as GLM-4.7, which requires around 160 million reasoning tokens. Lower token usage translates into direct cost savings during deployment and also suggests a more efficient operating profile at scale. Although Kimi K2.5 is slightly more token-intensive than Kimi K2 Thinking, this balance between effectiveness and efficiency can enhance scalability for businesses looking to integrate AI-driven processes.

From a technical standpoint, Kimi K2.5 employs a mixture-of-experts (MoE) architecture with 1 trillion total parameters, of which 32 billion are active. The use of native INT4 precision rather than FP8/BF16 contributes to its more manageable size of approximately 595GB. This smaller footprint improves operational efficiency and eases integration within existing infrastructure, which supports the model’s scalability potential.
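To put the size figure in context, the following is a rough back-of-the-envelope sketch rather than a published specification. It assumes every weight is stored at a single uniform precision and ignores any file or runtime overhead; the function and variable names are illustrative only. Under those assumptions a 1 trillion parameter model would occupy about 500 GB at INT4, so the reported figure of roughly 595GB presumably reflects some tensors being kept at higher precision, which the source does not break down.

```python
# Back-of-the-envelope weight-storage estimate.
# Parameter counts come from the article; the uniform-precision
# assumption is ours and is not a published specification.

TOTAL_PARAMS = 1_000_000_000_000   # 1 trillion total parameters
ACTIVE_PARAMS = 32_000_000_000     # 32 billion active parameters
BITS_PER_WEIGHT = {"INT4": 4, "FP8": 8, "BF16": 16}


def weight_size_gb(num_params: int, bits_per_weight: int) -> float:
    """Size in decimal gigabytes if every weight used the same precision."""
    return num_params * bits_per_weight / 8 / 1e9


sizes = {
    name: weight_size_gb(TOTAL_PARAMS, bits)
    for name, bits in BITS_PER_WEIGHT.items()
}
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS

for name, size in sizes.items():
    print(f"{name}: {size:,.0f} GB")
print(f"Active share of parameters: {active_fraction:.1%}")
```

The comparison shows why the precision choice matters for deployment: under the same assumptions, FP8 would double the footprint and BF16 would quadruple it, while only about 3.2% of the parameters are active at a time.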

Another critical aspect for developers and specialists is the model’s hallucination rate, which Kimi K2.5 has reduced to 64%. This is a significant improvement over its predecessor, Kimi K2 Thinking, and a step toward more reliable output. The score of -11 on the AA-Omniscience Index suggests that Kimi K2.5 has a higher propensity to refrain from generating false information when uncertain. Such robustness is essential for real-world applications where stakes are high and accuracy is non-negotiable.

Overall, Kimi K2.5 sits between the leading proprietary models from OpenAI and Anthropic and the other open-weight models, and it widens the gap over the latter as it reinforces its leadership in open-weight model intelligence. With an Elo score suggesting a 66% win rate against the prior leader, GLM-4.7, Kimi K2.5’s advancements make it a substantial player in a market crowded with competing solutions.
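For readers who want to see how an Elo gap relates to a win rate, here is a minimal sketch. It assumes the conventional logistic Elo formula with a 400-point scale; the source does not say how GDPval-AA fits its ratings, so the evaluation may differ. The function names are illustrative, and the article does not state GLM-4.7's rating, so the gap below is only what a 66% win rate would imply under this assumption.

```python
import math


def elo_expected_score(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected win probability of A over B under the standard logistic Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / scale))


def elo_gap_for_win_rate(win_rate: float, scale: float = 400.0) -> float:
    """Rating difference (A minus B) that yields the given win rate for A."""
    return scale * math.log10(win_rate / (1.0 - win_rate))


# Rating gap implied by the 66% win rate quoted in the article (assumed Elo form).
gap = elo_gap_for_win_rate(0.66)
print(f"Implied Elo gap: {gap:.0f} points")

# Round trip: a model rated 1309 against one rated `gap` points lower.
print(f"Win rate check: {elo_expected_score(1309, 1309 - gap):.2f}")
```

Under this formula a 66% win rate corresponds to a gap of roughly 115 Elo points, which gives a sense of how far ahead of the previous open-weight leader the 1309 score places Kimi K2.5.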

In conclusion, Kimi K2.5 offers a compelling blend of performance, cost efficiency, and scalability that positions it well against both established models like OpenAI’s offerings and newer entrants. For SME leaders and automation specialists, the choice between platforms hinges not just on immediate capabilities but also on long-term ROI and adaptability. Kimi K2.5’s strengths in agentic tasks, multimodality, and operational efficiency make it a worthy consideration for businesses looking to future-proof their automation strategies.

FlowMind AI Insight: As the AI landscape continues to mature, tools like Kimi K2.5 illuminate new paths for businesses aiming to harness advanced capabilities without sacrificing cost efficiency. Strategic adoption of such technologies can redefine operational paradigms and enhance organizational agility in an increasingly competitive marketplace.


2026-01-27 20:48:00