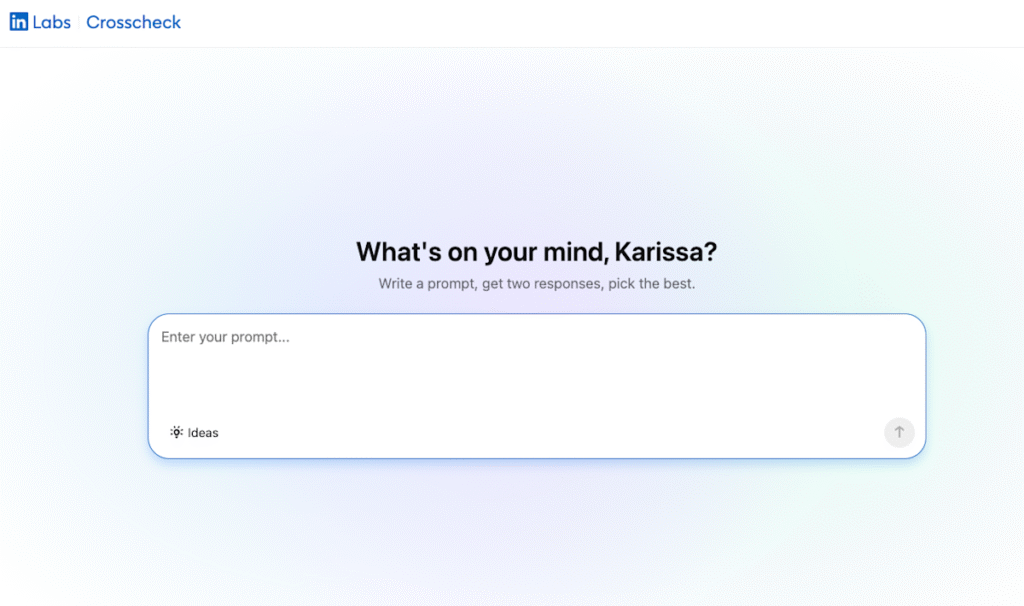

LinkedIn has recently launched Crosscheck, a feature that lets users experiment with various AI models without the token limits or subscription costs typical of many AI platforms. Aimed particularly at professionals with LinkedIn Premium accounts in the United States, it lets users run “blind taste tests” of AI responses, so that tool selection can rest on direct comparison rather than reputation.

With Crosscheck, a user enters a prompt and receives two answers generated by different AI models. Only after choosing the preferred answer does the user learn which model produced which response. Hiding the model names until after the choice keeps brand reputation from influencing it, and it lets users explore AI capabilities intuitively while demystifying how AI tools are selected. In a market where several companies (including OpenAI, Anthropic, Google, Microsoft, and others) are vying for attention, seeing model performance prompt by prompt gives professionals a way to assess AI platforms more objectively.
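The blind comparison described above can be sketched in a few lines. This is only an illustrative sketch, not LinkedIn's implementation: the function names (`blind_pair`, `record_vote`, `win_rates`) and the model labels `model_x` and `model_y` are hypothetical, and the responses are placeholder strings rather than real model output.

```python
import random
from collections import Counter


def blind_pair(responses, rng):
    """Shuffle two (model, text) pairs and expose them only as labels A and B."""
    pair = list(responses.items())
    rng.shuffle(pair)  # random order, so position gives no hint about the model
    return {"A": pair[0], "B": pair[1]}


def record_vote(tally, blinded, choice):
    """Reveal the model behind the chosen label and count it as a win."""
    model, _text = blinded[choice]
    tally[model] += 1
    return model


def win_rates(tally):
    """Share of votes won by each model; empty if nobody has voted yet."""
    total = sum(tally.values())
    return {model: wins / total for model, wins in tally.items()} if total else {}


# Hypothetical example: placeholder answers from two unnamed models.
rng = random.Random(0)
tally = Counter()
responses = {"model_x": "Draft reply one.", "model_y": "Draft reply two."}

blinded = blind_pair(responses, rng)
# The user sees only blinded["A"][1] and blinded["B"][1], then picks a label.
revealed = record_vote(tally, blinded, "A")
print(f"You preferred the answer written by {revealed}")
```

Repeating this over many prompts and reading `win_rates(tally)` gives a rough, task-specific preference score for each model, which is the kind of evidence a team could use when shortlisting tools.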

For SMB leaders and automation specialists, comparing these AI models is essential when choosing the right tools for their organizations, so it helps to consider the strengths and weaknesses of the leading platforms. OpenAI, known for its versatile language models, excels at generating human-like text and shows strong natural language understanding. Its API, used for creative content, conversational AI, and automated customer support, is another draw; however, cost can be a significant factor. Because pricing depends on usage, costs can escalate with heavy use and strain the budgets of smaller enterprises.

Anthropic, with its emphasis on AI safety, represents an intriguing alternative. While its models may not possess the same level of creativity as OpenAI’s, they prioritize ethical considerations and reliability, making them less prone to generating harmful or misleading content. Organizations that value ethical AI deployment, especially those in industries with stringent compliance requirements, may therefore find Anthropic the more suitable option.

When analyzing costs, one must not overlook the hidden expenses of model training, operational uptime, and third-party service integrations. Automation platforms such as Zapier and Make, which connect workflows across multiple applications, add to that total. They excel at quick integrations, but their costs can accumulate, particularly for SMBs that run a large number of automated operations. Make’s more flexible pricing structure can appeal to companies focused on specific automations, but it demands a level of technical skill that might not be readily available in smaller organizations.
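A back-of-the-envelope estimate shows how usage-based pricing and integration fees add up. The sketch below is an assumption-laden illustration: the function `monthly_cost`, its parameters, and every number in the example are hypothetical and do not reflect the actual pricing of any vendor named in this article.

```python
def monthly_cost(requests_per_day, tokens_per_request, price_per_1k_tokens,
                 platform_fee=0.0, days=30):
    """Estimate monthly spend: usage-based model cost plus a flat integration fee."""
    tokens_per_month = requests_per_day * days * tokens_per_request
    usage_cost = tokens_per_month / 1000 * price_per_1k_tokens
    return usage_cost + platform_fee


# Hypothetical figures, for illustration only.
light = monthly_cost(requests_per_day=200, tokens_per_request=1500,
                     price_per_1k_tokens=0.01, platform_fee=50.0)
heavy = monthly_cost(requests_per_day=2000, tokens_per_request=1500,
                     price_per_1k_tokens=0.01, platform_fee=50.0)
print(f"light usage: {light:.2f} per month, heavy usage: {heavy:.2f} per month")
```

In this made-up scenario the flat fee dominates at low volume while the usage component dominates at high volume, which is why it helps to rerun the estimate with a team's own expected request counts before committing to a platform.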

As Crosscheck develops, its capacity to aggregate and anonymize usage data across LinkedIn could prove valuable to model builders. Insights drawn from real-world preferences across various sectors could be used to improve model performance. The result would be a feedback loop that helps refine AI tools and, in turn, improves ROI for users who continuously iterate on their operational processes. Organizations investing in AI tools may therefore see returns grow over time as their chosen models adapt more closely to their needs.

Scalability is another critical consideration when choosing an AI tool. OpenAI supports a wide range of applications, which lets organizations scale its use across multiple departments and makes it an attractive option for those planning to expand. Yet its models’ performance can vary with task complexity and the training data used. In contrast, Anthropic’s emphasis on safe and interpretable AI may appeal to industries that prioritize stability as they scale, even if the breadth of application is narrower.

Ultimately, as organizations continue exploring the potential of AI, platforms offering features that align closely with user needs will likely emerge as leaders. The Crosscheck feature on LinkedIn exemplifies a forward-thinking approach that enhances user engagement and drives intelligent comparisons across AI models. By closely monitoring model performance through user interaction data, LinkedIn may significantly impact how SMB leaders choose their automation tools in the future.

In conclusion, when evaluating the strengths, weaknesses, costs, ROI, and scalability of AI and automation platforms, leaders should pay attention to first-hand user comparisons like those enabled by LinkedIn’s Crosscheck. Doing so can help organizations navigate the increasingly complex landscape of AI technologies more effectively.

FlowMind AI Insight: The Crosscheck feature not only democratizes access to AI model evaluations but also gives AI companies feedback they can use to refine their offerings. As organizations leverage these insights, they stand to benefit from heightened efficiency and tailored AI solutions that align with their operational objectives.


2026-04-20 18:39:00